Export BeautifulSoup to CSV

Overview

This guide explains how to extract web data with BeautifulSoup and export it to CSV. Putting scraped data into a CSV file makes it easier to organize, analyze, and share, whether you're conducting market research or streamlining your data workflow. This page covers what BeautifulSoup is, the step-by-step process of exporting to a CSV file, and practical use cases for such exports. It also introduces Sourcetable, an alternative to CSV exports for BeautifulSoup, and answers frequently asked questions about scraping and exporting data.

What is BeautifulSoup?

Beautiful Soup is a Python library designed for quick turnaround projects such as web scraping. It parses HTML and XML documents into a parse tree, which can then be used to extract data efficiently. This is particularly useful for sifting through 'tag soup', a term for structurally or syntactically incorrect HTML written for a web page.

The library offers a suite of features that make it a powerful tool for developers. It automatically handles encoding by converting documents to Unicode and ensuring that the output is in UTF-8, reducing the complexity associated with various encodings. Additionally, Beautiful Soup integrates with multiple Python parsers like lxml and html5lib, providing flexibility and the ability to try different parsing strategies depending on the requirements of the project.
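The encoding handling described above can be sketched as follows. This is a minimal illustration, not the only way to do it: the input bytes are a made-up example, and the built-in "html.parser" is used so nothing beyond BeautifulSoup itself needs installing. Passing "lxml" or "html5lib" as the second argument selects those parsers instead, provided they are installed separately.

```python
from bs4 import BeautifulSoup

# Hypothetical input: bytes encoded as ISO-8859-1 (Latin-1), not UTF-8.
raw = "<p>café</p>".encode("iso-8859-1")

# from_encoding tells Beautiful Soup how to decode the bytes; without it,
# the library tries to detect the encoding on its own.
soup = BeautifulSoup(raw, "html.parser", from_encoding="iso-8859-1")

text = soup.p.get_text()             # a Python str (Unicode)
utf8_bytes = soup.p.encode("utf-8")  # the tag re-encoded as UTF-8 bytes

print(text)
print(utf8_bytes)
```

Whatever the source encoding, the text you work with is an ordinary Python string, which is what makes writing it out to a UTF-8 CSV file straightforward later on.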

Beautiful Soup also provides idiomatic ways of navigating, searching, and modifying the parse tree, which can save programmers hours or days of work. Beyond simple parsing, it supports complex tree traversal, which is essential for extracting data and converting it into a structured form that can be used for various applications.
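As a rough illustration of navigating, searching, and modifying a parse tree, consider the sketch below. The HTML snippet and its class names are invented for the example and do not come from any real site.

```python
from bs4 import BeautifulSoup

# Hypothetical markup standing in for a downloaded page.
html = """
<html><body>
  <h1>Listings</h1>
  <ul>
    <li class="item"><a href="/a">Notebook</a></li>
    <li class="item"><a href="/b">Pencil</a></li>
  </ul>
</body></html>
"""

soup = BeautifulSoup(html, "html.parser")

# Navigate: attribute access returns the first matching tag.
heading = soup.h1.get_text(strip=True)

# Search: find_all returns every tag that matches the filter.
names = [li.a.get_text(strip=True) for li in soup.find_all("li", class_="item")]

# Modify: assigning to .string replaces the tag's contents.
soup.h1.string = "Stationery listings"

print(heading, names, soup.h1.get_text())
```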

Exporting BeautifulSoup Data to a CSV File

Installing BeautifulSoup

To begin extracting data using BeautifulSoup, you must first install the BeautifulSoup4 library. This is accomplished by running the command pip install beautifulsoup4 in your terminal or command prompt, ensuring that the library is available in your Python environment.

Extracting Data Using Nested Loops

With BeautifulSoup installed, you can proceed to extract data from web pages. This involves writing Python code that navigates the hierarchical structure of HTML elements on a webpage. Nested for loops are a common way to do this: an outer loop iterates over container elements, such as categories or individual listings, and an inner loop drills down into each one to reach the specific data you want to extract.
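A nested-loop extraction might look like the sketch below. The markup, the class names (category, listing, title, price), and the data-name attribute are all assumptions made up for this example; a real page will need its own selectors.

```python
from bs4 import BeautifulSoup

# Hypothetical page fragment: listings grouped under category containers.
html = """
<div class="category" data-name="Books">
  <div class="listing"><span class="title">Python Basics</span><span class="price">12.00</span></div>
  <div class="listing"><span class="title">Data Cookbook</span><span class="price">18.50</span></div>
</div>
<div class="category" data-name="Stationery">
  <div class="listing"><span class="title">Gel Pen Set</span><span class="price">4.20</span></div>
</div>
"""

soup = BeautifulSoup(html, "html.parser")

rows = []
# Outer loop: one iteration per category container.
for category in soup.find_all("div", class_="category"):
    # Inner loop: one iteration per listing inside that category.
    for listing in category.find_all("div", class_="listing"):
        rows.append({
            "category": category["data-name"],
            "title": listing.find("span", class_="title").get_text(strip=True),
            "price": listing.find("span", class_="price").get_text(strip=True),
        })

for row in rows:
    print(row)
```

Collecting each record as a dictionary keeps the column names next to the values, which maps naturally onto the rows of a CSV file.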

Running the Code in an IDE

Using an Integrated Development Environment (IDE) like PyCharm can facilitate the development of your Python script. After writing the code that includes the nested for loops to extract the desired data, you can run the script within the IDE. The extracted data will then be processed and output in CSV format.

Exporting Data to CSV Format

The final step in the data extraction process is to export the data into a CSV file. The code you write in Python will handle the conversion of the extracted data into CSV format, which is a widely used format for storing tabular data and can be easily imported into spreadsheet applications or processed by other software.
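One way to handle the export is Python's standard csv module, as in the sketch below. The table markup, the write_csv helper, and the output file name are hypothetical; the file is written to the system temporary directory only to keep the example self-contained.

```python
import csv
import tempfile
from pathlib import Path

from bs4 import BeautifulSoup

# Hypothetical table standing in for scraped content.
html = """<table>
<tr><th>title</th><th>price</th></tr>
<tr><td>Python Basics</td><td>12.00</td></tr>
<tr><td>Pens, assorted</td><td>4.20</td></tr>
</table>"""

soup = BeautifulSoup(html, "html.parser")
table_rows = soup.find_all("tr")
header = [th.get_text(strip=True) for th in table_rows[0].find_all("th")]
records = [
    [td.get_text(strip=True) for td in tr.find_all("td")]
    for tr in table_rows[1:]
]


def write_csv(path, header, records):
    """Write a header row followed by the data rows to a UTF-8 CSV file."""
    # newline="" lets the csv module control line endings itself.
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(header)
        writer.writerows(records)


csv_path = Path(tempfile.gettempdir()) / "listings_example.csv"
write_csv(csv_path, header, records)
print(f"Wrote {len(records)} rows to {csv_path}")
```

The csv module quotes values that contain commas, such as "Pens, assorted" here, so the file still opens correctly in spreadsheet applications.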

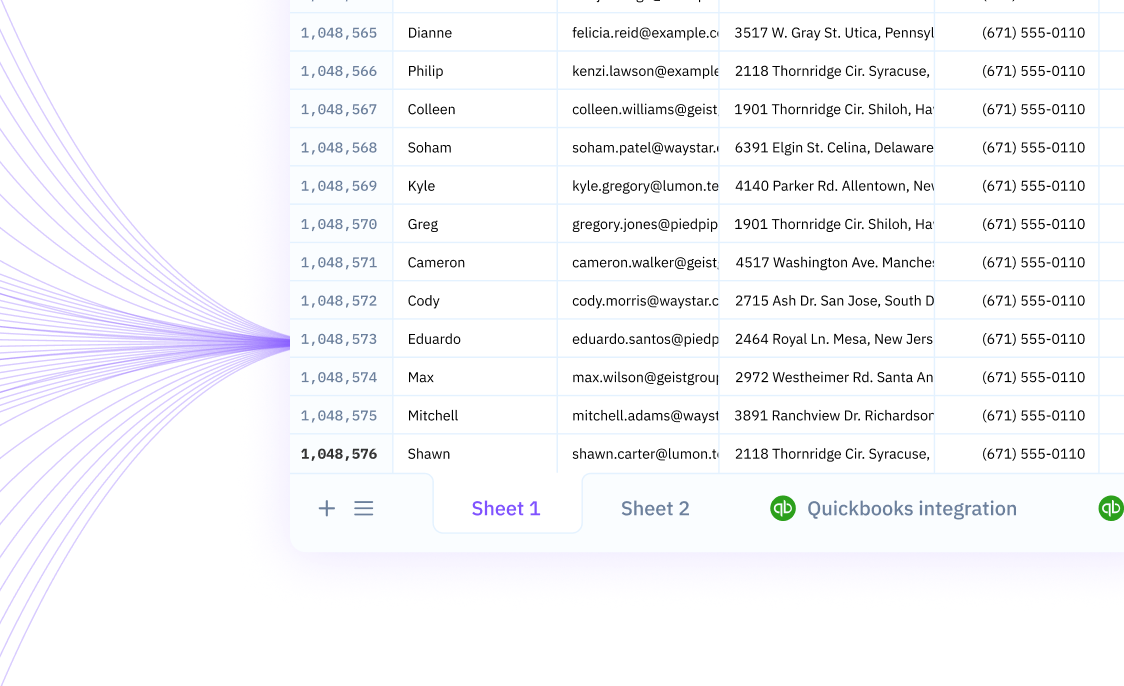

Optimize Your Data Workflow with Sourcetable

When working with data extraction from web sources, such as using BeautifulSoup, the traditional approach often involves exporting data to a CSV file and then importing it into a spreadsheet program. However, this method can be cumbersome and time-consuming. Sourcetable offers a more streamlined and efficient solution for managing your data. By using Sourcetable, you can directly import BeautifulSoup data into a spreadsheet, bypassing the need for a CSV intermediary. This not only saves time but also reduces the potential for errors that can occur during the export/import process.

Sourcetable syncs your live data from almost any app or database, including the outputs from web scraping tools like BeautifulSoup. Its capability to automatically pull in data from multiple sources into one centralized location allows for powerful query and analysis in a familiar spreadsheet environment. The automation features of Sourcetable eliminate the repetitive task of manual data entry, enhancing productivity and ensuring that your data is always up-to-date for real-time business intelligence. Embrace the agility and accuracy that Sourcetable provides, and revolutionize the way you handle web-extracted data.

Common Use Cases

- Use case 1: Extracting job listings from a website and saving them in a CSV file for further analysis

- Use case 2: Scraping product information from an e-commerce site like carousell.com and storing it for competitive pricing analysis

- Use case 3: Compiling a list of links from a webpage into a CSV for a link audit or SEO purposes

- Use case 4: Gathering data on books and stationery from carousell.com for market research
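To make one of these concrete, the link audit in use case 3 could be sketched roughly as follows. The links_to_csv function, the sample HTML, and the example.com base URL are all hypothetical; the CSV is built in memory here, but the same writer works with a file opened for writing.

```python
import csv
import io
from urllib.parse import urljoin

from bs4 import BeautifulSoup


def links_to_csv(html, base_url):
    """Return CSV text with one row per link: its anchor text and absolute URL."""
    soup = BeautifulSoup(html, "html.parser")
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["text", "url"])
    # href=True skips <a> tags that have no href attribute.
    for a in soup.find_all("a", href=True):
        # urljoin resolves relative links against the page's base URL.
        writer.writerow([a.get_text(strip=True), urljoin(base_url, a["href"])])
    return buffer.getvalue()


sample = (
    '<p><a href="/jobs">Jobs</a> '
    '<a href="https://example.org/about">About</a> '
    "<a>No link</a></p>"
)
csv_text = links_to_csv(sample, "https://example.com/")
print(csv_text)
```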

Frequently Asked Questions

Can BeautifulSoup be used to extract data into CSV files?

Yes. BeautifulSoup handles the extraction, and your Python code (for example, with the standard csv module) then writes the extracted data to a CSV file.

What are the steps to export data from BeautifulSoup to CSV using PyCharm?

First, open the PyCharm IDE. Then, create a new project, write or copy the code, and run the project. The data will appear in the project in CSV format.

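Do I need to use PyCharm to write the code for exporting data from BeautifulSoup to CSV?

No. PyCharm is the IDE used in this walkthrough, but the code is ordinary Python. You can write it in any editor or IDE and run it with any Python interpreter that has BeautifulSoup installed.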

How do I know if my data has been successfully exported to CSV from BeautifulSoup?

When you run the code in PyCharm, the CSV file will appear in the project. Open it to confirm that it contains the extracted data.
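If you prefer to check the result programmatically, a small script can read the file back and count its rows. The sketch below is one possible approach; the check_csv helper, the file name, and the column names are hypothetical, and the file is created inside the example only so that it runs on its own.

```python
import csv
import tempfile
from pathlib import Path


def check_csv(path, expected_header):
    """Return the number of data rows, or raise if the header is unexpected."""
    with open(path, newline="", encoding="utf-8") as f:
        reader = csv.reader(f)
        header = next(reader, None)
        if header != expected_header:
            raise ValueError(f"unexpected header: {header!r}")
        return sum(1 for _ in reader)


# Stand-in for the file a scraping script would have produced.
csv_path = Path(tempfile.gettempdir()) / "export_check_example.csv"
with open(csv_path, "w", newline="", encoding="utf-8") as f:
    writer = csv.writer(f)
    writer.writerow(["title", "price"])
    writer.writerows([["Python Basics", "12.00"], ["Gel Pen Set", "4.20"]])

row_count = check_csv(csv_path, ["title", "price"])
print(f"{csv_path.name}: {row_count} data rows")
```

Comparing the row count with the number of items you expected to scrape is a quick way to spot a selector that silently matched nothing.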

Conclusion

BeautifulSoup, a powerful Python library, is adept at extracting data from web pages, which Python code can then write to CSV files. By using PyCharm and following a step-by-step process, one can easily create a project to run the code that extracts data and exports it into CSV format. The tutorial by Havid Riyono further simplifies this task by guiding users through exporting data to CSV files, specifically for a Carousell project within PyCharm that targets the book and stationery categories on carousell.com. While this method is effective, an even more streamlined approach is available with Sourcetable, which allows for direct importation of data into a spreadsheet, bypassing the need for an intermediate CSV export. To enhance your data management and efficiency, sign up for Sourcetable and start importing data directly into your spreadsheets.