Export BigQuery to CSV

Overview

Welcome to our comprehensive guide on how to export data from BigQuery to a CSV file—a process paramount for data analysts and scientists wishing to leverage the versatility of CSV format for data portability and ease of use. CSV files are particularly invaluable when you need to import your data into spreadsheets for further analysis or presentations. In this guide, we will delve into what BigQuery is, the steps to efficiently export BigQuery tables to CSV files, the practical applications of this skill, and an alternative method using Sourcetable for instances where CSV might not be the optimal choice. Additionally, we have included a helpful Q&A section to address common questions about exporting BigQuery data to CSV.

Exporting BigQuery Data to CSV

Using the EXPORT DATA Statement

To export the results of a query to a CSV file in Google Cloud Storage, you can use the EXPORT DATA statement within BigQuery. This method exports table data using SQL and requires specifying the data format as CSV. Note that the EXPORT DATA statement does not guarantee the file size or the order of the data in the exported CSV file. If you're exporting more than 1 GB of data, you must use a wildcard (*) in the destination URI to generate multiple sharded files.

Using BigQuery's Web UI

BigQuery's Web UI provides an intuitive interface for exporting data to CSV files. When using the Web UI, you simply select the table you wish to export, choose the export format as CSV, and provide the destination Cloud Storage path. Remember that the CSV files exported will not contain a header by default and may need to be compressed using GZIP, especially if you're exporting large datasets.

Using the bq Command-Line Tool

The bq command-line tool offers a convenient way to export data to CSV by using the bq extract command. This tool allows you to define the Cloud Storage destination, the export format, and whether the CSV file should be compressed. It's essential to ensure that the destination Cloud Storage bucket location matches the location of the BigQuery dataset.

Using the BigQuery API

The BigQuery API enables programmatic export of data to CSV files by generating an extract job. You can check the status of the extract job using the job's unique ID. The API, along with client libraries, can also submit jobs to export data. Various functions like extractTableToCsv demonstrate the process of writing a table to Cloud Storage as a CSV file in different programming languages like PHP and Node.js.

Using Client Libraries

Client libraries provide a way to export data to CSV files using programming languages such as PHP and Node.js. Functions like extractTableToGCS (Node.js) and extractTable (PHP) illustrate how to write a table to Cloud Storage as a CSV file. These libraries abstract the underlying API calls and offer a more streamlined developer experience.

Using Dataflow

For situations where you need to export large datasets or perform additional data processing, you can use a service like Dataflow. Dataflow can read data directly from BigQuery and write it to CSV files in Cloud Storage. This method avoids manual exports and is documented as part of BigQuery I/O in the Apache Beam documentation.

Monitoring and Quotas

Export jobs, including those that export to CSV, are subject to quotas and limitations. You can monitor the usage of export jobs and check job statuses using the INFORMATION_SCHEMA.JOBS view. To keep track of the amount of data being exported and stay within budget, you can set up Cloud Monitoring alert policies.

Considerations for Storage and Cost

When exporting data to CSV, the exported data is stored in Cloud Storage and is subject to the applicable Cloud Storage rates. To manage costs effectively, it's important to consider the size of the exported files and the storage class of the Cloud Storage buckets being used. Data export pricing information is detailed on the BigQuery pricing page.

Import BigQuery Data into Spreadsheets Using Sourcetable

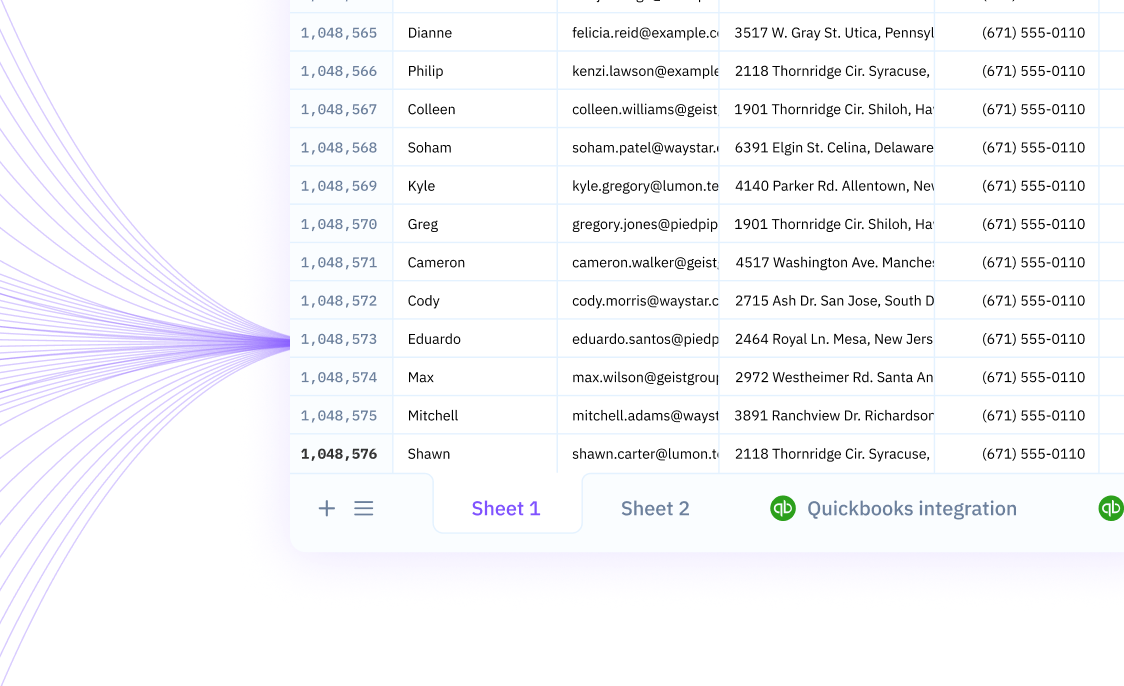

With Sourcetable, you have the advantage of a seamless and dynamic integration of BigQuery data directly into your spreadsheets. Unlike the traditional export to CSV and subsequent import to a spreadsheet program, Sourcetable syncs your live data from BigQuery, ensuring that your spreadsheet always reflects the most current data without the need for manual updates. This real-time synchronization is not only a huge timesaver but also reduces the risk of human error associated with manual data handling.

Moreover, Sourcetable is designed to work with the complexity of BigQuery data while offering the simplicity and familiarity of a spreadsheet interface. This fusion of advanced database management with user-friendly operations allows for a more efficient workflow, especially for those who are already accustomed to spreadsheets but require the robust analytics capabilities of BigQuery. Sourcetable serves as a powerful tool for automation and business intelligence, enabling users to effortlessly pull in and query data from multiple sources, all within a single platform.

Common Use Cases

-

Exporting data to Cloud StorageB

-

Processing data for nightly batch jobsB

-

Creating daily backupsB

-

Exporting a daily snapshot to Cloud Storage for backup and archival purposesB

Frequently Asked Questions

How much data can BigQuery export to a single CSV file?

BigQuery can export up to 1 GB of data to a single CSV file.

What happens if I need to export more than 1 GB of data from BigQuery to CSV?

If you are exporting more than 1 GB of data, you must export your data to multiple CSV files. BigQuery supports exporting to multiple files using a wildcard operator in the URI.

Can I export nested and repeated data to CSV format from BigQuery?

No, BigQuery does not support exporting nested and repeated data in CSV format.

Where can I export my BigQuery table data to in CSV format?

The only supported export location for BigQuery table data in CSV format is Google Cloud Storage.

How can I ensure the order of the exported table data in CSV from BigQuery?

To guarantee the order of the exported table data, you should use the EXPORT DATA statement.

Conclusion

Exporting data from BigQuery to CSV is a straightforward process that can be customized to meet your data needs. Whether you're dealing with small datasets that can be exported to a single file or larger datasets that require sharding across multiple files, BigQuery provides the flexibility to export to Cloud Storage using the EXPORT DATA statement or the bq extract command. Remember to utilize the wildcard URI for datasets over 1 GB and disable the CSV header if necessary. EXPORT and EXTRACT jobs are asynchronous, subject to quotas and limitations, and can be monitored for successful completion. For those looking to streamline their workflow even further, consider using Sourcetable. Instead of exporting to CSV, Sourcetable allows you to import data directly into a spreadsheet, simplifying the data transfer process. Sign up for Sourcetable to get started and take your data management to the next level.